Apple AirPods Pro 2: Review of Hearing Aid Functionality

Reviews, Prices, and Sound Samples

Advancements in today’s hearing technology are often focused on making things more affordable and accessible to people who have hearing loss, and encouraging more people to evaluate and manage their hearing healthcare.

That’s because so few people who could benefit from amplification are using hearing aids. For example, here in the UK, about 6.7 million people could benefit from hearing aids but fewer than one-third (about 2 million people) use them. Similarly, in the United States, only about one-third of people over age 70 who could benefit from hearing aids actually use them.

If you've seen any of my other recent product reviews, such as the Lexie B2 Powered by Bose or the Sony OTC hearing aids, you know how passionate I am about bringing hearing aid technology into as many people's ears as possible—even if it's not the typical process of having hearing aids fitted by an audiologist like me. While I still strongly recommend seeing an audiologist, I understand that hearing aid costs can be prohibitive. For example, in the United States, the average hearing aid price is $2372 each and the devices are usually not covered by insurance.

Can You Use Apple AirPods Pro 2 as Hearing Aids?

Recognizing this, Apple has been progressively improving and adding capabilities to their operating system (iOS) and AirPods Pro 2 for those with hearing loss. Over the last few years, they've introduced some pretty smart technology to ensure that they stand out in what is, without a doubt, a crowded market.


Apple AirPods Pro

As a result, there is a huge amount of tech crammed into the AirPods Pro 2, which means that if you're an Apple user and have a set of them, you may already possess what you need to improve your hearing—today! And that does include some hearing-aid-like, or what’s now referred to as “hearable,” functionality.

Before we get into using AirPods Pro 2 as a listening enhancement device, it’s worth noting that this functionality is really suitable only for people who have mild to moderate hearing loss, although that’s the lion’s share of hearing aid users. For more severe hearing losses (i.e., about 65 dB HL or more on a hearing test), the amplification just isn’t strong enough.
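To make that cutoff concrete, here is a minimal Python sketch of how you might check an audiogram against it. It assumes a three-frequency pure-tone average (500, 1000, and 2000 Hz), which is one common audiology convention rather than anything Apple documents, and the example thresholds are made up for illustration. Only the roughly 65 dB HL cutoff comes from the paragraph above.

```python
from statistics import mean

# Cutoff taken from the article: about 65 dB HL or more is too severe.
SEVERE_CUTOFF_DB_HL = 65


def pure_tone_average(thresholds_db_hl, frequencies_hz=(500, 1000, 2000)):
    """Average the thresholds (dB HL) at the chosen test frequencies.

    The three-frequency average is an assumed convention for this sketch.
    """
    return mean(thresholds_db_hl[f] for f in frequencies_hz)


def within_amplification_range(thresholds_db_hl):
    """True if the pure-tone average is below the ~65 dB HL cutoff."""
    return pure_tone_average(thresholds_db_hl) < SEVERE_CUTOFF_DB_HL


# Hypothetical audiogram for one ear: frequency in Hz -> threshold in dB HL.
example_ear = {250: 20, 500: 30, 1000: 40, 2000: 50, 4000: 60}

print(pure_tone_average(example_ear))
print(within_amplification_range(example_ear))
```

This is only a rough screen. An audiologist will look at the whole audiogram, not a single average.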

How to Set Up Apple AirPods Pro as Hearing Aids

On my iPhone, I'm using iOS 16.1, which includes features designed to assist people with mild to moderate hearing loss. There is a special section on Apple's website covering all their accessibility features for folks with hearing loss.

In this video, HearingTracker Audiologist Matthew Allsop explains how to set up your Apple AirPods Pro as hearing aids. Closed captions are available on this video. If you are using a mobile phone, please enable captions by clicking on the gear icon.

So let’s go over each of the essential features of AirPods Pro 2 if you wish to use them for hearing enhancement. To access these features on your iPhone, follow this bread-crumb trail:

Settings app > Accessibility > Audio/Visual

When you get to the Audio/Visual screen, you'll discover some of Apple’s hearing-related features, such as:

Background sounds: Plays background sounds to mask environmental noise and may be helpful for people with tinnitus.

Mono audio: Useful if you have hearing only in one ear.

Sound balance: Helps balance the level of volume between your ears.

However, the best features are located within the Headphone Accommodations section at the top of the menu, and we’ll focus on these later in this article.

How to get and/or upload your hearing test

To get the most out of your AirPods Pro 2 hearing features through the 'Headphone Accommodations' section, you’ll first need to share your hearing test results with your iPhone.

Starting with iOS 14, Apple allows you to enter your hearing test results using two basic methods, both of which are found in the Apple Health App which comes with your iPhone.


Open the Apple Health App by clicking on the app icon with a red heart.

You can then use this bread-crumb trail on your Apple Health App to get to the Audiogram section:

Browse (at the bottom of screen) > Hearing > Audiogram

There are two methods for obtaining and entering your hearing test results:

Method #1: Upload an audiogram that you already have.

By far, the most accurate way to obtain a proper hearing test and audiogram is via a hearing care professional (HCP). Once you get your audiogram from them, you can either take a photograph of it using the iPhone camera or enter the information directly into the Audiogram section. Either way, the result is the same.

Method #2: Test your hearing using the Mimi App, and share your results with your Apple Health app

There are several free online apps that allow you to test your hearing. One of the best and most popular is the Mimi Hearing Test which can be found toward the bottom of the “Audiogram” screen. Once you download this app, you’ll see a button that allows you to share your hearing test information with the Apple Health App. You can then take the hearing test in a very quiet place, and the information will be transferred to your iPhone for customized hearing.

Because it’s probably the most common way for users to set up the AirPods Pro 2 as a hearing aid, I also plan to provide a mini-review of the Mimi Hearing Test app. In a nutshell, I have some reservations about recommending this method for customizing your AirPods Pro 2, because you’ll benefit so much more by seeing an audiologist. However, it is certainly better than doing nothing!


The best way to get your AirPods Pro 2 personalized for your unique hearing loss is to obtain an audiogram from a hearing care provider.

What happens after uploading your hearing test

If your test results show the same hearing loss in each ear, the headphone accommodations will take the average of the two ears and apply that profile to the appropriate audio channels. However, if you have an uneven hearing loss, the left and right audio channels will be positioned towards your better ear. So, once your hearing test is uploaded into your iPhone's health area, it will display neatly in the Headphone Accommodations, instantly boosting sound reproduction.

How do your test results affect the sound of the earbuds? When you stream sounds from your phone to your AirPods Pro—such as phone calls, music, videos, the radio, or podcasts—your phone will automatically adjust the sound at the specific pitches (frequencies) to compensate for your hearing loss.
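If you're curious what "adjusting the sound at specific pitches" could look like in numbers, here is a small Python sketch. It is not Apple's algorithm, which isn't published. It averages two hypothetical ears, as described above for similar losses, and then applies the classic "half-gain" rule of thumb from audiology (boost each frequency by roughly half the measured loss). All thresholds and function names are made up for illustration.

```python
# Hypothetical audiogram: test frequency in Hz -> hearing threshold in dB HL.
left_ear = {500: 20, 1000: 30, 2000: 40, 4000: 50}
right_ear = {500: 30, 1000: 30, 2000: 50, 4000: 60}


def average_ears(left, right):
    """Average the two ears' thresholds at each shared test frequency."""
    return {freq: (left[freq] + right[freq]) / 2 for freq in left if freq in right}


def half_gain_boost(profile):
    """Illustrative half-gain rule: boost each frequency by half the threshold.

    This is an assumed stand-in for whatever rule the phone really uses.
    """
    return {freq: threshold / 2 for freq, threshold in profile.items()}


profile = average_ears(left_ear, right_ear)
boost_db = half_gain_boost(profile)

for freq in sorted(boost_db):
    print(f"{freq} Hz: threshold {profile[freq]} dB HL, boost {boost_db[freq]} dB")
```

The point is simply that higher thresholds, which usually sit in the higher pitches, get more boost, so streamed audio is shaped to your hearing rather than just turned up.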

Hearing-aid-like features of the AirPods Pro 2

When wearing the AirPods Pro, you can switch between Active Noise Cancellation and Adaptive Transparency (on and off). You should know that both of these modes work best when the devices fit well and you’re using the optimal-size eartips supplied with the earbuds.

I won’t go into detailed instructions about how to employ these features because there are several different ways they can be accessed in your AirPods Pro menu using Apple’s own instructions. For example, you can gain access to several of the listening and Headphone Accommodations features by pressing AirPods in the Accessibility screen and then choosing Audio Accessibility Settings. You can also go to the Bluetooth menu and click on the blue “information” icon to access the Ear Tip Fit Test, quickly turn on/off Noise Cancellation and Adaptive Transparency, etc.

Note that some of these areas could change soon with the introduction of AirPods Pro 2 (or whatever name Apple chooses for their second-generation product line).

Active Noise Cancellation

The most prominent feature is Active Noise Cancellation, which detects external sound using the AirPods Pro's outward-facing microphones. Your AirPods then counter this noise by a process called “phase inversion,” which essentially means they generate a sound wave that is the mirror image of the noise. This effectively cancels out external noises before you can hear them. After that, there's an inward-facing microphone that listens within your ear for undesired internal sounds, which are subsequently canceled, leaving you with only a limited range of sounds from your surroundings.
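The idea behind phase inversion is easy to show with a few lines of Python. This is a toy sketch, not how the AirPods firmware works: it builds a pure 200 Hz hum (an arbitrary choice), flips it upside down, and adds the two together. It also shows what is left over if the "anti-noise" is 10% too quiet, since real-world cancellation is never perfect.

```python
import math

SAMPLE_RATE_HZ = 48_000  # samples per second used for this toy signal


def tone(freq_hz, num_samples, amplitude=1.0):
    """Generate a sine wave as a list of samples."""
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE_HZ)
        for i in range(num_samples)
    ]


def peak(samples):
    """Largest absolute sample value."""
    return max(abs(s) for s in samples)


# A hypothetical low-frequency hum, two full cycles long.
noise = tone(200, 480)

# Perfect anti-noise: the mirror image of the noise.
anti_noise = [-s for s in noise]
residual_perfect = [n + a for n, a in zip(noise, anti_noise)]

# Imperfect anti-noise: 10% too quiet, so some hum leaks through.
anti_noise_weak = [-0.9 * s for s in noise]
residual_weak = [n + a for n, a in zip(noise, anti_noise_weak)]

print(peak(noise))             # size of the original hum
print(peak(residual_perfect))  # what is left after perfect cancellation
print(peak(residual_weak))     # what is left after imperfect cancellation
```

With a perfect mirror image the sum is silence. The weaker version leaves about a tenth of the hum, which is why fit and eartip seal matter so much.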

Adaptive Transparency provides personalized amplification

In a way, Adaptive Transparency is the opposite of Active Noise Cancellation; it lets environmental sounds into your ears, allowing you to hear everything that's going on around you. And, to be honest, it's a little strange when you first hear it because your brain tells you that your ears are blocked, but you can still hear everything!

As you click on and move into the Customize Adaptive Transparency setup, you can further change the volume or different facets of the sound. You can adjust the left or right balance, and place greater emphasis on the bass or treble of whoever's speaking in front of you while wearing the AirPods. The bass contributes to the fullness and richness of a person’s voice, while the treble accentuates the clarity of their voice.

Finally, you can set the ambient noise reduction, which lets you control the amount of background noise picked up by the AirPods Pro from the world around you.

Better loud noise handling

The new Adaptive Transparency feature offers better listening comfort and protects against sudden, intense noises. This is a feature most advanced hearing aids possess. According to Apple Senior Engineer Mary-Ann Rau, this will dynamically reduce environmental noise—like sirens, construction jackhammers, or even loudspeakers at a concert—for more comfortable everyday listening, with the sound being analyzed at 48,000 times per second to react instantaneously to any loud noise.

Conversation Boost

Conversation Boost is tucked down at the bottom of the menu, but I believe it should be at the top! Apple claims that this feature focuses your AirPods Pro on the person in front of you by using computational audio and directional beamforming microphones—features we've traditionally seen in hearing aids. This provides forward-facing directionality with an ultra-narrow beam, isolating sounds coming from in front of you so you can hear more clearly in noisy environments.
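For readers who want a feel for how microphones can "point" at a talker, here is a deliberately simplified Python sketch of the delay-and-sum idea behind beamforming. It is not Apple's implementation. It assumes just two microphones, a spacing of 0.17 m (a hypothetical figure, roughly the width of a head), and a single pure tone, and it computes how strongly a sound survives when the two microphone signals are added together.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air


def two_mic_gain(freq_hz, mic_spacing_m, angle_deg):
    """Relative output (0 to 1) when two microphone signals are summed.

    angle_deg is the direction of the sound source, where 0 means straight
    ahead and 90 means directly to one side. A sound from the side reaches
    one microphone later than the other, so the two copies partly cancel.
    """
    delay_s = mic_spacing_m * math.sin(math.radians(angle_deg)) / SPEED_OF_SOUND_M_S
    phase_difference = 2 * math.pi * freq_hz * delay_s
    # Two equal sine waves offset by a phase difference sum to an
    # amplitude of 2 * |cos(phase / 2)|; dividing by 2 normalizes to 1.
    return abs(math.cos(phase_difference / 2))


front = two_mic_gain(1000, 0.17, 0)   # talker straight ahead
side = two_mic_gain(1000, 0.17, 90)   # noise directly to the side

print(f"front: {front:.2f}, side: {side:.2f}")
```

In this toy setup, the 1 kHz tone from the front passes at full strength while the same tone from the side is almost entirely cancelled. Real systems use more microphones and much smarter processing across all frequencies, but the principle is the same.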


Conversation Boost adds directional hearing.

How to enable Conversation Boost:

- Settings app > Accessibility > Audio/Visual

- Tap and Enable Headphone Accommodations

- Tap Adaptive Transparency

- Enable Conversation Boost

Are the Apple AirPods useful for people with hearing loss?

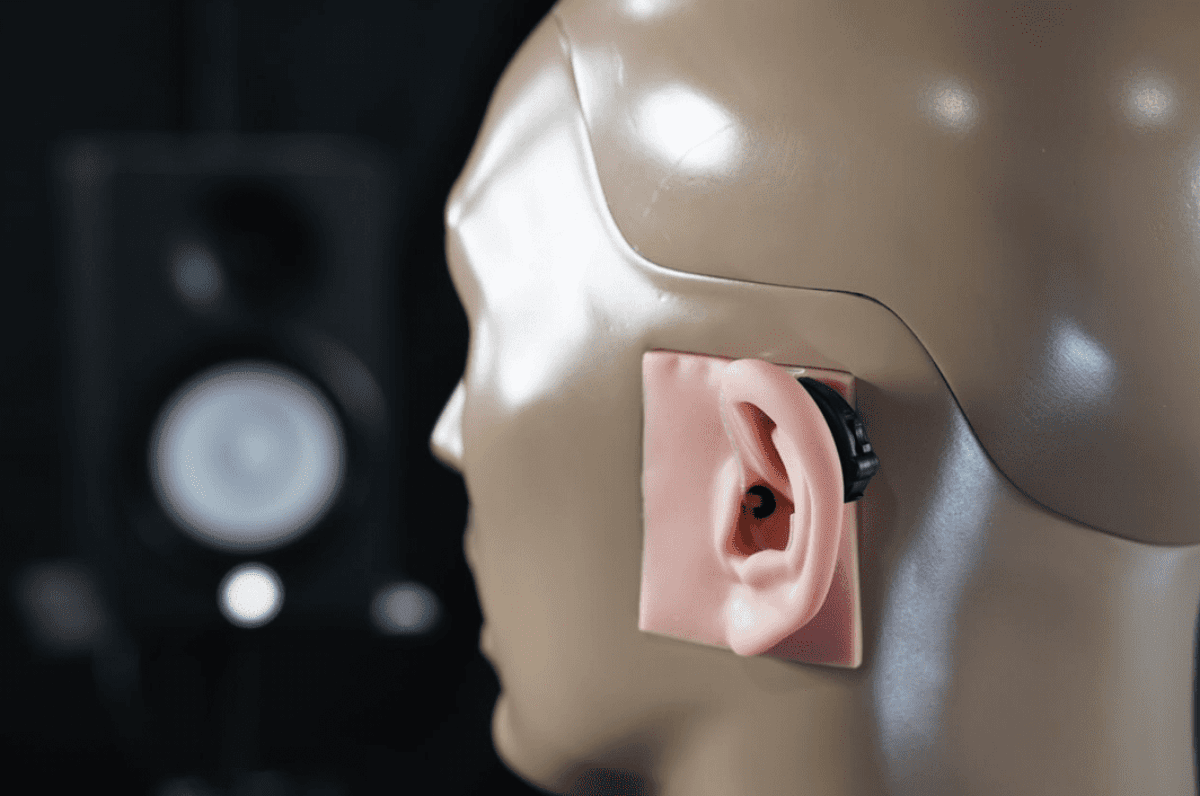

Some pretty intriguing objective studies have been published by audiologists evaluating the effectiveness of the AirPods Pro in boosting conversations and helping people hear better in noise.

For example, a recent study (March 2022) was published by a group of respected researchers from the National Acoustic Laboratories (NAL) in Australia. They found that both the Conversation Boost and Ambient Noise Reduction features of AirPods Pro improved hearing in background noise by around 7 decibels when measuring speech in front of a listener in a noisy environment. In other words, it works quite well!

The researchers concluded: “With these hearing aid-like features, AirPods Pro have the potential to help some people with hearing loss understand and communicate during conversations.” (Also see NAL’s earlier review of Apple’s Headphone Accommodations feature.)
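To put the roughly 7 decibel figure from the NAL study in perspective, decibels can be converted to plain ratios with the standard formulas. The short Python sketch below does only that conversion. It says nothing about how the study was run, and the function names are my own.

```python
def db_to_power_ratio(db):
    """Convert a decibel change to a ratio of sound power."""
    return 10 ** (db / 10)


def db_to_amplitude_ratio(db):
    """Convert a decibel change to a ratio of sound pressure (amplitude)."""
    return 10 ** (db / 20)


improvement_db = 7  # approximate benefit reported in the study above

print(round(db_to_power_ratio(improvement_db), 2))
print(round(db_to_amplitude_ratio(improvement_db), 2))
```

So a 7 dB improvement means the speech has about five times more power relative to the background noise than it would without the feature, which is a meaningful difference in a noisy room.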


AirPods Pro and the Health App on iPhone

What are the key disadvantages when using AirPods Pro 2 as a hearing aid?

The AirPods Pro might be sounding pretty good to you right now, but there are a few things to keep in mind if you're thinking about using them as hearing aids.

Battery life

First and foremost, the AirPods Pro have a very limited battery life. They are only good for about 6 hours of use, and less if you use spatial audio, adaptive transparency, conversation boost, or hands-free calling. While this is 1.5 hours more than AirPods Pro v1, it's still not really sufficient for hearing aid use. You don't want to start wearing a hearing aid at 8 AM and have it die before lunchtime.

Traditional hearing aids are far better in this regard: a 3-4 hour charge typically done overnight should provide for around 16-24 hours of use based on that full charge.

The AirPods Pro's batteries are also non-replaceable. So, after a couple of years of heavy daily use, your battery life will be significantly reduced. Hearing aids with rechargeable batteries will also gradually lose power in the same way; however, you can usually get the batteries replaced, often without cost depending on the warranty and your HCP. There are also many hearing aids that use disposable button-cell batteries, and these are simply replaced as needed.

Own-Voice Issues

Another issue with using headphones to manage hearing loss is the occlusion effect, which relates to how you hear your own voice when talking. With age-related hearing loss, the user often has good low-pitch (low-frequency) hearing and will wish to have their ear canals as open as possible for a more natural listening experience. With the AirPods Pro blocking your ears, my concern is that it will feel like you have your fingers jammed in your ears all the time, which may not be ideal. For example, when speaking or eating, you'll perceive your voice to be “boomy” and confined inside your head; chewing might sound much louder than normal. Depending on your hearing ability, this could limit the benefit of using the AirPods Pro as hearing aids.

However, the Adaptive Transparency is beneficial in this regard. For the first time in any analogous technology I've evaluated, the occlusion effect is significantly reduced when the transparency option is enabled. This is likely the result of Apple's use of Active Occlusion Control (AOC), which measures the user's voice using internal microphones, and uses phase inversion to reduce the boominess often experienced with closed-canal hearing aids. Apple also uses physical vents to improve the own voice effect.

No lossless audio, and no mention of Bluetooth LE Audio or Auracast

Apple made no mention of support for lossless audio in their AirPods Pro 2 launch event or press release. Implementing lossless audio would allow the new earbuds to reproduce streamed music bit-for-bit the same as the original audio file, resulting in extremely high-quality sound. As Apple Music currently offers lossless audio, it’s possible AirPods Pro 2 may offer it via the Apple Lossless Audio Codec (ALAC) at a future date.

And while AirPods Pro 2 use Bluetooth 5.3 wireless technology, there was no mention of Auracast, a Bluetooth Low-Energy (LE) Audio technology released in June that is expected to become the “next generation” assistive listening technology. The Auracast platform will enable an audio transmitter, such as a smartphone, laptop, television, or public address (PA) system, to broadcast audio to an unlimited number of nearby Bluetooth audio receivers, including hearing aids, earbuds, and similar Auracast-enabled devices.

Although Bluetooth is becoming prevalent in hearing aids and Auracast could ultimately replace the venerable telecoil, we anticipate the telecoil will coexist with Auracast for many years to come. As with lossless audio, Apple could be planning to announce Auracast compatibility at a future date.

Improvements in 2nd generation AirPods Pro

In a press release Apple said that AirPods Pro 2 “offer richer bass and crystal-clear sound across a wider range of frequencies” thanks to a “new low-distortion audio driver and custom amplifier.” Substantial improvements in sound quality could mean improvements in speech clarity for those attempting to use AirPods Pro 2 as hearing aids. And Apple’s new H2 chip—which replaces the H1 chip found in the original AirPods Pro—delivers twice as much Active Noise Cancellation (ANC) with the help of optimally-placed microphones and acoustic vents. And as discussed above, Apple has also improved battery life and handling for loud noises while in Adaptive Transparency.

My verdict on using AirPods Pro 2 as hearing aids

In conclusion, I believe that the AirPods Pro 2 are a great “first foray” into managing hearing loss. Still, they should be fitted with caution because hearing tests taken with external apps are not currently as accurate as they should be. The best thing to do would be to have a complete hearing evaluation with an audiologist and then use that audiogram for the fitting procedure.


Using AirPods Pro as hearing aids

I'm also not convinced that the world is ready for people to walk around with their headphones on. Would people think I was being disrespectful or rude (believing I’m listening to music instead of them) when wearing headphones and sitting down for dinner with them? If they knew I was wearing hearing aids, I’m pretty sure they wouldn’t. However, I suppose this new idea of using headphones as hearing aids must begin somewhere. So we may be marching toward a new perception of what headphones are and when/where they’re acceptable.

While I don't believe AirPods Pro 2 will be as effective as traditional hearing aids for all of the reasons I've discussed, I'm thrilled that Apple is making waves in hearing technology. And I would advise anyone with hearing loss to try amplification instead of doing nothing.

So, if you're not sure a regular hearing aid is for you because of the cost or you just aren't ready to wear a hearing aid, the AirPods Pro 2 are a low-risk way to dip your toes into the waters of hearing amplification, especially since they're only $249 versus thousands of dollars for professionally fit hearing aids. In my opinion, they are an excellent first intermediary step toward acquiring regular hearing aids.


HearAdvisor partners with HearingTracker to provide objective laboratory performance data. All hearing aids are fitted and performance-tested for mild sloping to moderate hearing loss. All audio samples are cut off above 10 kHz. *Specific model tested: Apple AirPods Pro 2.

Apple AirPods Pro 2 Physical Specifications

| Apple AirPods Pro 2 | |
|---|---|
| Rating | 100% (1 review) |
| Accelerometer | |
| Bluetooth® Audio | Protocol |
| Gyroscope | |
| Hands-Free Calling | Protocol |
| IP Rating (Liquid) | 4 |
| Rechargeable Batteries | Battery Type: Lithium-ion |
| Tap Controls | Tap Options |
| Voice Assistant | Voice Assistant |
| Volume Rocker | |

Model details listed above may be incomplete or inaccurate. For full specifications please refer to product specifications published by the original equipment manufacturer. To suggest a correction to the details listed, please email info@hearingtracker.com.

Apple AirPods Pro 2 Technology Details

| Apple AirPods Pro 2 | |
|---|---|
| Price | $199 / pair |
| Rating | 100% (1 review) |
| Active Noise Cancellation (ANC) | |
| Self-fitting | Tuned based on hearing test through device; tuned based on audio preference selections; tuned by user directly |
| Spatial Audio | |
| Active occlusion cancellation (AOC) | |
| Adaptive EQ | |
| Adaptive Transparency | |
| Conversation Boost | |
| Headphone Accommodations | |

Technology specifications listed above may be incomplete or inaccurate. For full specifications please refer to product specifications published by the original equipment manufacturer. To suggest a correction to the details listed, please email info@hearingtracker.com.

Apple AirPods Pro 2 Reviews

Hearing aid reviews are fundamentally different from reviews for most other consumer electronic products. The reason is that individual factors, like degree of hearing loss, have a profound effect on one's success and overall satisfaction with the product. When purchasing a hearing aid, you'll need to consider more than just your hearing outcome ...


Overall Ratings

Hearing Tracker uses a ten-question survey to assess consumer feedback on hearing aids. The percentage bars below reflect the average ratings provided per question.

Note: Original answers provided in star rating format.

I wasn't ready to buy a hearing aid yet. Honestly, didn't want to step foot in a hearing aid place or even admit that I have a problem... I saw the video about airpods pro 2 working like hearing aids and decided to give it a try. At first I had a few audio dropouts but Apple seems to have fixed that with the latest firmware, and in terms of helping me hear, they definitely do that. I don't know that I want to take these out to dinner yet though.


I was wondering if it will be able to correct, while only in one ear?

my hearing loss only in one side

do you know is there a function like that for the quiet comfort bose?

My son is deaf in one ear.

Do these airpods have cross-over capabilities.

meaning sound from his deaf ear is sent to the airpod in his good ear.